👂 Active learning for text classification with small-text#

In this tutorial, you will learn how to set up a complete active learning loop with a Hugging Face transformer:

Use the excellent small-text library to set up your active learner;

Use a Rubrix listener to build and start an active learning loop;

Use the Rubrix UI to annotate examples and learn actively;

Introduction#

Active learning is a special case of machine learning in which a learning algorithm can interactively query a user (or some other information source) to label new data points with the desired outputs. Wikipedia

Supervised machine learning often requires large amounts of labeled data that are expensive to generate. Active Learning (AL) systems attempt to overcome this labeling bottleneck. The underlying idea is that not all data points are equally important for training the model. The AL system tries to query only the most relevant data from a pool of unlabeled data to be labeled by a so-called oracle, which is often a human annotator. Therefore, AL systems are usually much more sample efficient and need far less training data than traditional supervised systems.

This tutorial will show you how to incorporate Rubrix into an active learning workflow involving a human in the loop. We will build a simple text classifier by combining the active learning framework small-text and Rubrix. Hugging Face’s transformers will provide the classifier we will embed in an active learner from small-text. Rubrix excels in making you the oracle that conveniently teaches the model via an intuitive UI.

Setup#

Rubrix is a free and open-source tool to explore, annotate, and monitor data for NLP projects.

If you are new to Rubrix, check out the Github repository ⭐.

If you have not installed and launched Rubrix yet, check the Setup and Installation Guide.

For this tutorial, we also need some optional and third-party libraries that you can install via pip:

[ ]:

%pip install "rubrix[listeners]" "datasets~=2.5.0" "small-text>=1.1.0" "transformers[torch]"

The TREC dataset#

For this tutorial, we will use the well-known TREC dataset containing 6000 labeled questions; 5500 in the training and 500 in the test split. This dataset can be turned into a text classification task, in which a model must predict one of the six coarse labels given the question. The labels consist of ABBREVIATION (ABBR), ENTITY (ENTY), DESCRIPTION (DESC), HUMAN (HUM), LOCATION (LOC), and NUMERIC VALUE (NUM).

Let us load the dataset from the Hugging Face Hub:

[ ]:

import datasets

trec = datasets.load_dataset('trec', version=datasets.Version("2.0.0"))

Preprocessing the dataset#

We first need to wrap the dataset in a specific data class provided by small-text, the excellent active learning framework we will use in this tutorial. Since we will choose a Hugging Face transformer in the active learner, small-text will expect a TransformersDataset object that already contains the tokenized input text.

In order to build a TransformersDataset object, we first need a tokenizer:

[ ]:

import torch

from transformers import AutoTokenizer

# Choose transformer model: In non-gpu environments we use a tiny model to reduce the runtime

if not torch.cuda.is_available():

transformer_model = "prajjwal1/bert-tiny"

else:

transformer_model = "bert-base-uncased"

# Init tokenizer

tokenizer = AutoTokenizer.from_pretrained(transformer_model)

With this, we can create a TransformersDataset by calling TransformersDataset.from_arrays() which expects a list of texts, a numpy array (which indicates single-label classification), a tokenizer, and lastly the possible (integer values) of target labels within this dataset.

[ ]:

import numpy as np

from small_text import TransformersDataset

num_classes = trec["train"].features["coarse_label"].num_classes

target_labels = np.arange(num_classes)

train_text = [row["text"] for row in trec["train"]]

train_labels = np.array([row["coarse_label"] for row in trec["train"]])

# Create the dataset for small-text

dataset = TransformersDataset.from_arrays(train_text, train_labels, tokenizer, target_labels=target_labels)

We will also create a test dataset to track the performance of the transformer model during the active learning loop.

[ ]:

# Create test dataset

test_text = [row["text"] for row in trec["test"]]

test_labels = np.array([row["coarse_label"] for row in trec["test"]])

dataset_test = TransformersDataset.from_arrays(test_text, test_labels, tokenizer, target_labels=np.arange(num_classes))

Setting up the active learner#

Now that we have our datasets ready let’s set up the active learner. For this, we need two components:

the classifier to be trained;

the query strategy to obtain the most relevant data;

In our case, we choose a Hugging Face transformer as the classifier and a tie-breaker as the query strategy. The latter selects instances of the data pool with a small margin between the two most likely predicted labels.

[ ]:

from small_text import (

BreakingTies,

PoolBasedActiveLearner,

TransformerBasedClassificationFactory,

TransformerModelArguments

)

# Define our classifier

clf_factory = TransformerBasedClassificationFactory(

TransformerModelArguments(transformer_model),

num_classes=6,

# If you have a cuda device, specify it here.

# Otherwise, just remove the following line.

# kwargs={"device": "cuda"}

)

# Define our query strategy

query_strategy = BreakingTies()

# Use the active learner with a pool containing all unlabeled data

active_learner = PoolBasedActiveLearner(clf_factory, query_strategy, dataset)

Since most query strategies, including ours, require a trained model, we randomly draw a subset from the data pool to initialize our AL system. After obtaining the labels for this batch of instances, the active learner will use them to create the first classifier.

[ ]:

from small_text import random_initialization

import numpy as np

# Fix seed for reproducibility

np.random.seed(42)

# Number of samples in our queried batches

NUM_SAMPLES = 20

# Randomly draw an initial subset from the data pool

initial_indices = random_initialization(dataset, NUM_SAMPLES)

Rubrix and you: the perfect oracle#

With our active learner ready, it is time to teach it. We first create a Rubrix dataset to log and label the initial random batch queried by the active learner.

[ ]:

import rubrix as rb

# Choose a name for the dataset

DATASET_NAME = "trec_with_active_learning"

# Define labeling schema

labels = trec["train"].features["coarse_label"].names

settings = rb.TextClassificationSettings(label_schema=labels)

# Create dataset with a label schema

rb.configure_dataset(name=DATASET_NAME, settings=settings)

# Create records from the initial batch

records = [

rb.TextClassificationRecord(

text=trec["train"]["text"][idx],

metadata={"batch_id": 0},

id=idx,

)

for idx in initial_indices

]

# Log initial records to Rubrix

rb.log(records, DATASET_NAME)

Before switching to the Rubrix UI to label the records, we will set up the active learning loop. For this, we will use the listener decorator from Rubrix. The loop will run automatically once all records of a batch are labeled (see the query and condition argument of the decorator). It will trigger the classifier’s training, query a new batch from the active learner and log it to Rubrix. We will also keep track

of the accuracy of the current classifier by evaluating it on our test set.

[ ]:

from rubrix.listeners import listener

from sklearn.metrics import accuracy_score

# Define some helper variables

LABEL2INT = trec["train"].features["coarse_label"].str2int

ACCURACIES = []

# Set up the active learning loop with the listener decorator

@listener(

dataset=DATASET_NAME,

query="status:Validated AND metadata.batch_id:{batch_id}",

condition=lambda search: search.total==NUM_SAMPLES,

execution_interval_in_seconds=3,

batch_id=0

)

def active_learning_loop(records, ctx):

# 1. Update active learner

print(f"Updating with batch_id {ctx.query_params['batch_id']} ...")

y = np.array([LABEL2INT(rec.annotation) for rec in records])

# initial update

if ctx.query_params["batch_id"] == 0:

indices = np.array([rec.id for rec in records])

active_learner.initialize_data(indices, y)

# update with the prior queried indices

else:

active_learner.update(y)

print("Done!")

# 2. Query active learner

print("Querying new data points ...")

queried_indices = active_learner.query(num_samples=NUM_SAMPLES)

new_batch = ctx.query_params["batch_id"] + 1

new_records = [

rb.TextClassificationRecord(

text=trec["train"]["text"][idx],

metadata={"batch_id": new_batch},

id=idx,

)

for idx in queried_indices

]

# 3. Log the batch to Rubrix

rb.log(new_records, DATASET_NAME)

# 4. Evaluate current classifier on the test set

print("Evaluating current classifier ...")

accuracy = accuracy_score(

dataset_test.y,

active_learner.classifier.predict(dataset_test),

)

ACCURACIES.append(accuracy)

ctx.query_params["batch_id"] = new_batch

print("Done!")

print("Waiting for annotations ...")

Starting the active learning loop#

Now we can start the loop and switch to the Rubrix UI.

[ ]:

active_learning_loop.start()

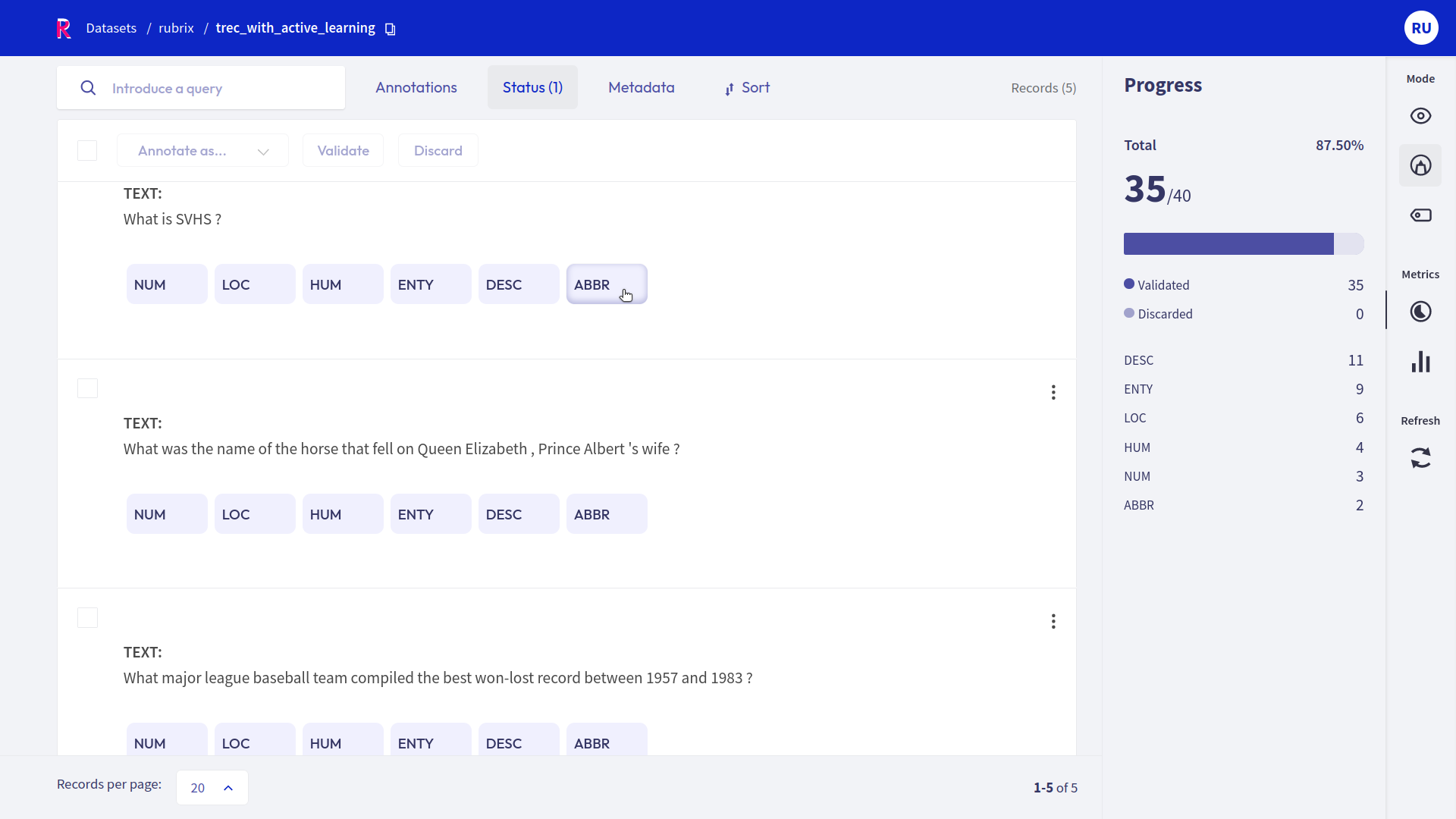

In the Rubrix UI, we will set the number of records per page to 20 since it is also our chosen batch size. Furthermore, we will use the Status filter to filter out already annotated records. Now, all we have to do is to annotate the displayed records. Once annotating everything, the classifier’s training will be automatically triggered.

After a few seconds, you should see the newly queried batch when pressing the Refresh button. The training can take longer depending on your machine and whether you have a CUDA device. You can always check the status of the active learning loop from your notebook.

Can we stop?#

After a few iterations, we can check the accuracy of the current classifier and plot its evaluation.

[ ]:

import pandas as pd

pd.Series(ACCURACIES).plot(xlabel="Iteration", ylabel="Accuracy");

We should achieve an accuracy of at least 0.8 after around 12 iterations, corresponding to roughly 260 annotated records. The stopping criterion is ultimately up to you, and you can choose more sophisticated criteria like the KappaAverage implemented in small-text.

[ ]:

active_learning_loop.stop()

Summary#

In this tutorial, we saw how you could embed Rubrix in an active learning loop involving a human in the loop. We relied on small-text to use a Hugging Face transformer within an active learning setup. In the end, we gathered a sample-efficient data set by annotating only the most informative records for the model.

Rubrix makes it very easy to use a dedicated annotation team or subject matter experts as an oracle for your active learning system. They will only interact with the Rubrix UI and do not have to worry about training or querying the system. We encourage you to try out active learning in your next project and make your and your annotator’s life a little easier.

Next steps#

⭐ Rubrix Github repo to stay updated.

📚 Rubrix documentation for more guides and tutorials.

🙋♀️ Join the Rubrix community! A good place to start is our slack channel.